
All Posts

Artifact: Deterministic Runtime

The Runtime Now Verifies Itself

Today I ran a full external verification pass on Verity. Not inside pytest. Not inside a mocked harness. Not with injected test keys. From a fresh shell:

Identity path locked.
EVS path locked.
Virtual environment confirmed.
Deterministic ingest executed.
Immediate verification run.

Result: OK total=3 verified=3 failed=0 primary=kid.hub.primary

What that means:
• Every artifact in the EVS log is cryptographically signed
• Every signature matches…

Michael Thigpen

Feb 24 · 1 min read
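A minimal Python sketch of what a pass like this checks, under stated assumptions: the post does not describe the EVS log format or Verity's signature scheme, so the `Artifact` record, `sign()`, `verify_log()`, and the HMAC-SHA256 signing below are hypothetical stand-ins (a real deployment would more plausibly use asymmetric signatures, so verifiers never hold the signing key). The only detail taken from the post is the shape of the summary line.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


# Hypothetical record shape: the real EVS log format is not described in the post.
@dataclass(frozen=True)
class Artifact:
    kid: str        # key identifier, e.g. "kid.hub.primary"
    payload: dict   # the artifact body that was signed
    signature: str  # hex-encoded signature over the canonical payload


def canonical_bytes(payload: dict) -> bytes:
    # Deterministic serialization: sorted keys, no insignificant whitespace,
    # so the same payload always produces the same bytes to sign.
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign(key: bytes, payload: dict) -> str:
    # HMAC-SHA256 is an assumption standing in for whatever scheme Verity uses.
    return hmac.new(key, canonical_bytes(payload), hashlib.sha256).hexdigest()


def verify_log(artifacts: list[Artifact], keys: dict[str, bytes], primary: str) -> str:
    """Re-verify every artifact and return a one-line summary."""
    verified = failed = 0
    for art in artifacts:
        key = keys.get(art.kid)
        # An unknown key id counts as a failure, never a silent skip.
        ok = key is not None and hmac.compare_digest(sign(key, art.payload), art.signature)
        if ok:
            verified += 1
        else:
            failed += 1
    status = "OK" if failed == 0 else "FAIL"
    return f"{status} total={len(artifacts)} verified={verified} failed={failed} primary={primary}"


if __name__ == "__main__":
    keys = {"kid.hub.primary": b"demo-key-not-a-secret"}
    payloads = [{"seq": i, "event": "ingest"} for i in range(3)]
    log = [Artifact("kid.hub.primary", p, sign(keys["kid.hub.primary"], p)) for p in payloads]
    print(verify_log(log, keys, primary="kid.hub.primary"))
    # prints: OK total=3 verified=3 failed=0 primary=kid.hub.primary
```

A tampered payload or an unrecognized key id flips the status to FAIL and increments `failed`; the property worth keeping is that nothing in the log is skipped quietly.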

Ethics Is Architectural, Not Performative

“What Happens When Ethics Is Architectural, Not Performative”

Most people treat ethics like a policy layer. A checklist. A compliance document. Something you bolt on after the system is already built. But when you build something truly ethical (not as a promise, not as PR, but as architecture), the entire world behaves differently. Ethics stops being a rulebook. It becomes physics. It shapes:
• how creatures behave
• how people move
• how bonds form
• how authority works
• how…

Michael Thigpen

Feb 23 · 1 min read

Fully Sealed and Governed

The OS Is Now Governed

For months, I’ve been building something I haven’t seen implemented cleanly in the AI space: a fully governed runtime. Not a wrapper. Not a prompt constraint. Not a post-hoc safety overlay. A system where every boundary (ingest, generation, authority, truth, audit) is sealed, deterministic, and traceable. This week, the final pieces locked into place. What’s sealed:
• Sentinel: ingest surface is pure, deterministic, immune to nested dispatch.
• Guard…

Michael Thigpen

Feb 23 · 1 min read

Intelligence is not authority

Intelligence is not authority, and treating it as such is the core failure of modern AI systems. Models can reason. They can predict. They can generate convincing output. None of that grants the right to act. In high-impact systems, capability without authority is negligence. Intelligence must be governed by something external, deterministic, and enforceable, not by intent, policy documents, or post-hoc review. This is why governance cannot be optional or “best effort.” It m…

Michael Thigpen

Jan 28 · 1 min read

Constrained Autonomy Enabled

Guardian and Sentinel: Completing the Control Spine

EGAE is built around two cooperating control layers with distinct, non-overlapping roles:

Guardian defines authority. It establishes invariants, permissions, ethical boundaries, and refusal semantics. Guardian determines what must remain true for the system to act.

Sentinel observes over time. It performs long-horizon observation, pattern detection, and certainty estimation across signals, context, and system health. Crit…

Michael Thigpen

Jan 24 · 2 min read
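The division of roles between the two layers can be sketched in Python. This is an illustration under assumptions, not EGAE's implementation: `Action`, `Decision`, `Guardian.check()`, `Sentinel.certainty()`, the permission table, and the `no_bulk_delete` invariant are all hypothetical names. What it shows is the property the post describes: Guardian returns an explicit allow or refuse decision with a reason, while Sentinel only accumulates observations and reports an estimate, with no method that can grant permission.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass(frozen=True)
class Action:
    name: str
    actor: str
    params: dict = field(default_factory=dict)


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


# An invariant returns a refusal reason, or None when it holds.
Invariant = Callable[[Action], Optional[str]]


def no_bulk_delete(action: Action) -> Optional[str]:
    # Hypothetical example invariant.
    if action.name == "delete" and action.params.get("count", 0) > 10:
        return "bulk delete exceeds limit of 10"
    return None


class Guardian:
    """Defines authority: an action may run only if it is permitted and every invariant holds."""

    def __init__(self, permissions: dict[str, set[str]], invariants: list[Invariant]):
        self._permissions = permissions
        self._invariants = invariants

    def check(self, action: Action) -> Decision:
        # Deny by default: an actor with no entry has no permissions.
        if action.name not in self._permissions.get(action.actor, set()):
            return Decision(False, f"refused: {action.actor} lacks permission for {action.name}")
        for invariant in self._invariants:
            reason = invariant(action)
            if reason is not None:
                return Decision(False, f"refused: {reason}")
        return Decision(True, "allowed")


class Sentinel:
    """Observes over time and reports an estimate; it cannot authorize anything."""

    def __init__(self, window: int = 50):
        # Only the most recent `window` observations are kept.
        self._signals = deque(maxlen=window)

    def observe(self, healthy: bool) -> None:
        self._signals.append(healthy)

    def certainty(self) -> float:
        # Fraction of healthy observations in the window; 0.0 with no history.
        if not self._signals:
            return 0.0
        return sum(self._signals) / len(self._signals)


if __name__ == "__main__":
    guardian = Guardian({"muse": {"read", "delete"}}, [no_bulk_delete])
    print(guardian.check(Action(name="delete", actor="muse", params={"count": 50})))
    # prints: Decision(allowed=False, reason='refused: bulk delete exceeds limit of 10')
```

Keeping the refusal reason inside `Decision` means every "no" is explainable, and keeping Sentinel read-only preserves the non-overlap the post insists on.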

The Architecture Most Systems Never Reach

There’s a difference between a system that has features and a system that can survive having them removed. Embraced AI was built around the latter. What follows isn’t a philosophy piece, a tutorial, or a roadmap. It’s a factual snapshot of an architectural choice that is uncommon, and increasingly necessary.

A Spine, Not a Stack

At the center of Embraced AI is a fixed, locked core. Not a framework. Not a collection of services. A spine. This spine governs:
• Authority
• Permiss…

Michael Thigpen

Jan 24 · 2 min read

Proof of Intent

Most AI systems talk about governance. This is what it looks like when governance is enforced. Intelligence ≠ authority.

https://michaelsthigpen.wixsite.com/embracedai/egae

#Governance #Python #Conformance #ProofofIntent #EthicalAI #Architecture

Worth noting: several of those tests are compound invariants; one test often asserts multiple governance properties in a single run. We optimized for architectural coverage, not inf…

Michael Thigpen

Jan 23 · 1 min read

EGAE = The Real Differences

Where the Real Difference Lives in Modern AI Systems

Most conversations about AI focus on models, benchmarks, and capabilities. But the real difference between fragile systems and long-lived autonomous environments isn’t found in model size or clever prompts. It lives in the assumptions beneath the architecture. Modern AI systems are built on a quiet set of defaults:
• intelligence is stateless
• ethics is external
• safety is reactive
• prompts are control
• tests are verification
• fai…

Michael Thigpen

Jan 17 · 2 min read

EGAE Ethically Governed Autonomous Environments Part 3 of 3

Chapter 15: Auditability

A system that cannot explain what it did cannot be trusted with autonomy. Auditability is not about surveillance. It is about accountability, reconstruction, and restraint. In EGAE, auditability is a core requirement of governance, not a compliance feature layered on later.

Auditability Is Not Logging

Most systems log events. Few systems are auditable. Raw logs record activity. Auditability enables understanding. An auditable system can answer: What…

Michael Thigpen

Jan 15 · 15 min read
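Chapter 15's distinction between logging and auditability can be illustrated with one common technique, a hash-chained audit log. It is named here as a swapped-in example, not as EGAE's documented mechanism, and `AuditEntry`, `AuditLog`, `record()`, `verify()`, and `why()` are hypothetical names. Each entry records the decision and the reason behind it, and each links to the previous entry's SHA-256 hash, so editing any interior entry after the fact breaks verification.

```python
import hashlib
import json
from dataclasses import dataclass

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass(frozen=True)
class AuditEntry:
    seq: int
    actor: str
    action: str
    decision: str    # e.g. "allowed" or "refused"
    reason: str      # why the decision was made, not just that it happened
    prev_hash: str
    entry_hash: str


def _digest(seq: int, actor: str, action: str, decision: str, reason: str, prev_hash: str) -> str:
    # Canonical JSON array so the same fields always hash identically.
    body = json.dumps([seq, actor, action, decision, reason, prev_hash], separators=(",", ":"))
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class AuditLog:
    def __init__(self) -> None:
        self.entries: list[AuditEntry] = []

    def record(self, actor: str, action: str, decision: str, reason: str) -> AuditEntry:
        seq = len(self.entries)
        prev = self.entries[-1].entry_hash if self.entries else GENESIS
        entry = AuditEntry(seq, actor, action, decision, reason, prev,
                           _digest(seq, actor, action, decision, reason, prev))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash and link. Editing, reordering, or removing an interior
        # entry breaks the chain; detecting a truncated tail needs an externally stored head hash.
        prev = GENESIS
        for i, e in enumerate(self.entries):
            if e.seq != i or e.prev_hash != prev:
                return False
            if e.entry_hash != _digest(e.seq, e.actor, e.action, e.decision, e.reason, e.prev_hash):
                return False
            prev = e.entry_hash
        return True

    def why(self, action: str) -> list[str]:
        # Reconstruction query: who decided what about an action, and why.
        return [f"{e.actor}: {e.decision} ({e.reason})" for e in self.entries if e.action == action]


if __name__ == "__main__":
    log = AuditLog()
    log.record("muse", "delete", "refused", "bulk delete exceeds limit")
    log.record("muse", "read", "allowed", "permission granted")
    print(log.verify())        # prints: True
    print(log.why("delete"))   # prints: ['muse: refused (bulk delete exceeds limit)']
```

The `reason` field is what separates this from a raw event log: `why()` can answer a reconstruction question directly instead of leaving it to someone grepping timestamps.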

EGAE Ethically Governed Autonomous Environments Part 2 of 3

Chapter 8: Enforcement vs Suggestion

Most AI systems are built to suggest. They recommend actions, propose plans, generate options, and offer guidance. In many cases, this is sufficient. In others, it is dangerously inadequate. EGAE exists because suggestion is not governance.

The Seduction of Suggestion

Suggestion feels safe. A system that suggests rather than acts appears humble. Responsibility seems to remain with the human. Risk feels deferred. This framing is comfortabl…

Michael Thigpen

Jan 15 · 15 min read

EGAE Ethically Governed Autonomous Environments Part 1 of 3

Preface

This book did not begin as a theory. It began as a refusal. A refusal to accept systems that behave unpredictably under pressure. A refusal to accept ethics as marketing language instead of engineering discipline. A refusal to accept that autonomy must come at the cost of dignity, consent, or trust. For most of my life, I worked in environments where failure was not academic. In the military, reliability was not a feature; it was survival. Decisions had consequences.

Michael Thigpen

Jan 15 · 21 min read

An Entity

The System Is an Entity, But Authority Never Leaves the Human

Embraced OS is an entity, yes: a living system composed of perception, memory, reasoning, and action. But the part most systems forget, and the part we refused to forget, is this: Authority never leaves the human. The system does not decide who you are. It does not decide what matters. It does not decide what is acceptable. Humans do. That is why Guardian exists. That is why Sentinel watches the edges. That is…

Michael Thigpen

Dec 26, 2025 · 2 min read

Squashing Bugs!!

We Finally Caught the Invisible Bugs

For the past couple of weeks, we’ve been tracking down a class of bugs that don’t show up in stack traces, don’t fail deterministically, and don’t respect your mental health. The worst kind. This wasn’t about a missing import or a bad conditional. It was about invisible system behavior: voice input dispatching twice, UI and orchestration quietly disagreeing about authority, native crashes that vanished the moment you tried to observe them…

Michael Thigpen

Dec 25, 2025 · 2 min read

Persona Vertical Roadmap

Embraced OS: Persona Vertical Roadmap
From Stable Voice to Living Intelligence

This roadmap outlines how Embraced OS personas evolve vertically (each with a clear purpose, ethical boundary, and growth path) once Muse is fully stable and polished. The goal is not to create louder assistants, but distinct intelligences that feel intentional, trustworthy, and human-aligned. All personas operate under the same non-negotiable system guarantees:
• Guardian enforcement
• Sentinel mon…

Michael Thigpen

Dec 15, 2025 · 3 min read

Ethics

In Embraced OS, ethics are not enforced after execution — they define whether execution is possible.

Michael Thigpen

Dec 10, 2025 · 1 min read

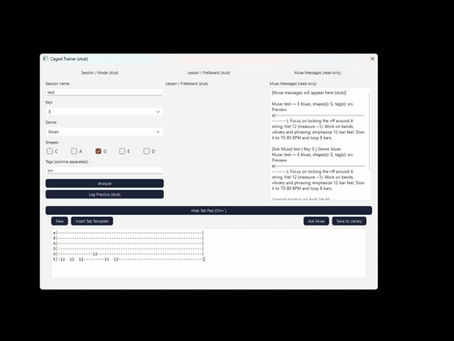

Stable With Verity

Some days you look at the work in front of you and realize… you’re no longer just building software… you’re building a world. Today marks a big milestone for Embraced OS, the ethics-driven personal computing ecosystem I’ve been architecting. Our internal CAGED module (the guitar-training + creative-intelligence layer) just passed its Phase 7 UI stabilization, and the entire OS stack is now forming into something that feels alive. What excites me most isn’t just the feature…

Michael Thigpen

Dec 9, 2025 · 2 min read

PR 151 Bonfire

🔥 THE BONFIRE OF PR-151

A full cast gathering

Guardian drags the PR out like a condemned prisoner: “Rule violation. Scope breach. Encoding corruption. Exiled.”
Bud tosses the first match: “I tried to be calm and gentle, but screw this one.”
Echo is already sharpening a stick for marshmallows: “This PR violated more contracts than a rogue AI on a caffeine drip.”
Muse stands there in a hoodie like she’s done with everyone’s shit: “I’ve never seen YAML so haunted in my life…

Michael Thigpen

Dec 8, 2025 · 1 min read

Embraced OS Guardian Phase 3 Push Video

https://michaelsthigpen.wixsite.com/embracedai

Michael Thigpen

Dec 7, 2025 · 1 min read

The First Persona Driven OS

Embraced AI Milestone: Foundations Locked, Horizons Ahead
December 2025

Today marks a turning point in the journey of Embraced OS and the CAGED Trainer. What began as sketches of philosophy and fragments of prototypes has now matured into a coherent, living system. The foundations are no longer abstract; they are wired, tested, and behaving exactly as envisioned.

🚩 Milestone Achieved: v0.1.x
• Smart Staff Growth → TAB editor that expands intelligently, only when needed.
• O…

Michael Thigpen

Dec 5, 2025 · 2 min read

Embraced OS - Master Plan

EMBRACED OS: MASTER PLAN
==============================================================

1. INTRODUCTION

Embraced OS represents a fundamental rethinking of how humans interact with technology. Unlike traditional operating systems that prioritize data collection, cloud tethering, and attention extraction, Embraced OS is built on the opposite philosophy: privacy, agency, locality, calmness, and explicit consent. It is a human-centered environment that behaves as a guardian, col…

Michael Thigpen

Dec 2, 2025 · 5 min read
