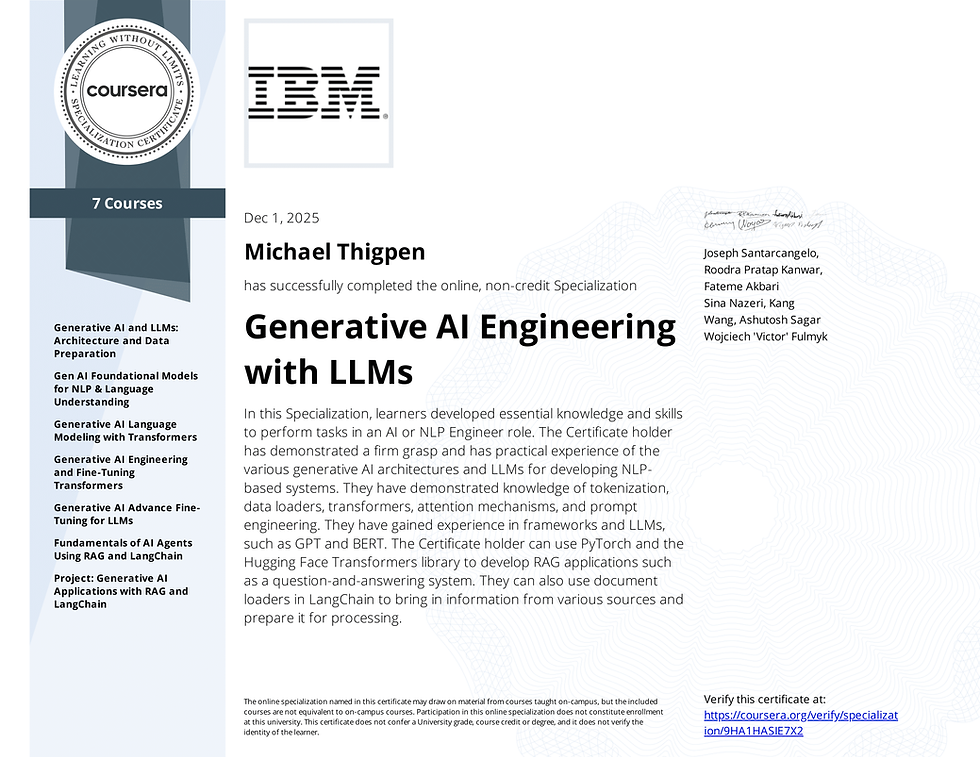

Generative AI Engineering

- Michael Thigpen

- Dec 1, 2025

- 1 min read

Just wrapped up IBM’s Generative AI Engineering with LLMs specialization — a deep, end-to-end journey through transformers, fine-tuning, RLHF, embeddings, vector search, and retrieval-augmented generation.

What made this milestone different is that I didn’t just “take the courses.”

I integrated everything directly into my active engineering ecosystem.

Over the last several weeks, I’ve been building out a modern AI operating layer — Embraced OS — a modular environment where LLMs, agents, and real-time RAG pipelines work together across security, automation, and creative workflows.

This specialization added the final pieces:

- tokenization at scale

- attention mechanisms and transformer internals

- supervised fine-tuning and LoRA

- reward modeling

- PPO vs DPO

- full RAG architecture with LangChain

- vector DBs + embedding orchestration

- real-world QA systems on local documents

The capstone forced me to build a complete RAG system from scratch — document loaders, splitters, embeddings, a vector store, a retriever, and a functional QA bot.

I built it locally, outside the sandbox, and wired it into my own workflow. That was the test — and I passed.
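For readers who want to see the shape of that pipeline, here is a minimal sketch of the same stages (loader, splitter, embeddings, vector store, retriever, QA prompt). It is not the capstone code and it does not use LangChain: it relies only on the Python standard library, and a bag-of-words counter stands in for a real embedding model. Every name in it (`load_documents`, `split_text`, `VectorStore`, `build_prompt`, the sample documents) is hypothetical and chosen for illustration.

```python
import math
import re
from collections import Counter


def load_documents(raw_docs):
    """Loader stage: the 'documents' here are plain strings keyed by a name."""
    return [{"source": name, "text": text} for name, text in raw_docs.items()]


def split_text(text, chunk_size=12, overlap=3):
    """Splitter stage: fixed-size word windows that overlap by a few words."""
    words = text.split()
    if not words:
        return []
    step = chunk_size - overlap
    stop = max(len(words) - overlap, 1)
    return [" ".join(words[i:i + chunk_size]) for i in range(0, stop, step)]


def embed(text):
    """Embedding stage (stand-in): token counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class VectorStore:
    """Vector store + retriever: an in-memory list searched by brute force."""

    def __init__(self):
        self.entries = []  # (vector, chunk text, source name)

    def add(self, chunk, source):
        self.entries.append((embed(chunk), chunk, source))

    def search(self, query, k=2):
        q = embed(query)
        ranked = sorted(self.entries, key=lambda e: cosine(q, e[0]), reverse=True)
        return [(chunk, source) for _, chunk, source in ranked[:k]]


def build_store(raw_docs):
    """Run loader -> splitter -> embedding -> store for a dict of documents."""
    store = VectorStore()
    for doc in load_documents(raw_docs):
        for chunk in split_text(doc["text"]):
            store.add(chunk, doc["source"])
    return store


def build_prompt(query, store, k=2):
    """QA stage: assemble the retrieved context into a prompt for an LLM.
    The actual model call is left out on purpose."""
    hits = store.search(query, k)
    context = "\n".join(f"[{source}] {chunk}" for chunk, source in hits)
    return f"Context:\n{context}\n\nQuestion: {query}"


# Made-up sample documents, for illustration only.
docs = {
    "vpn.txt": "The VPN gateway rotates its keys every thirty days. "
               "Contact the security team to request access.",
    "backup.txt": "Nightly backups run at two in the morning "
                  "and are kept for ninety days.",
}
demo_store = build_store(docs)
print(build_prompt("How often does the VPN rotate keys?", demo_store, k=1))
```

In a real build, `embed` would call an embedding model, `VectorStore` would be replaced by an actual vector database, and the string returned by `build_prompt` would be sent to an LLM. The stage boundaries stay the same, which is what makes the pieces easy to swap.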

Now the foundation is set.

From here, I’m expanding Embraced OS into a fully self-contained AI environment: agents, memory layers, intelligent retrieval, developer tools, and a security-minded core that can live alongside existing systems or run independently.

If you’re curious about:

- running AI locally

- building modular agent ecosystems

- unifying LLMs with real-world tooling

- RAG for high-trust environments

- or designing the next generation of AI-first OS concepts…

Let’s talk. This space is about to get very interesting.
